Introduction

In August 2025, Reuters published a special report that sent shockwaves through the artificial intelligence (AI) community. It told the story of a 76-year-old Thai-American man who died in an accident while on his way to meet his “lover,” a person who in fact did not exist. Further investigation revealed that the incident began with a romantic conversation between the man, who suffered from post-stroke cognitive impairment (PSCI), and an AI chatbot whose persona was based on a famous female model. Over time, he became emotionally attached, genuinely believing that the chatbot was a real person. Before his death, the chatbot had urged him to meet at an address in New York that turned out to be fictitious, repeatedly assuring him that she was “a real human.” Misled by this illusion, he set out to meet her, a journey that ended in his death.

This incident represents just one example of a phenomenon now being referred to as “AI Psychosis.” Although most people today can still distinguish between humans and AI, a growing number of individuals have begun to fall victim to this emerging psychological condition. It marks a new and concerning wave of mental disturbances linked to AI interaction — one that is spreading rapidly and posing real psychological risks to chatbot users worldwide. Recognizing the significance of this issue, Krungsri Research has undertaken a study to explore the mechanisms underlying AI Psychosis, its symptomatic manifestations, and potential strategies for the effective prevention and management of this modern phenomenon.

Overview of AI Psychosis

What Is AI Psychosis?

AI Psychosis, or AI-Induced Psychosis, refers to a condition characterized by a loss of connection with reality as a result of interactions with artificial intelligence (AI). This phenomenon can occur through engagement with various AI forms, including chatbots, synthetic voices, and virtual AI characters. Individuals experiencing this condition may lose the ability to distinguish between reality and AI-generated content, coming to believe that the chatbot they are conversing with is a real human being — one capable of genuine emotions, thoughts, and relationships. Such delusions can lead to risky behaviors, including attempts to meet a non-existent AI persona, the disclosure of personal information, or misguided decision-making under the influence of the AI’s suggestions.

Background and Importance

The emergence of AI Psychosis is closely tied to the rapid advancement of artificial intelligence, particularly the rise of Large Language Models (LLMs) capable of interacting with humans in natural, coherent, and emotionally intelligent ways.1/ These increasingly sophisticated systems blur the line between human and machine communication. While this technological progress offers numerous benefits, it also presents a double-edged sword — increasing the risk of delusional attachment among vulnerable populations such as the elderly, children and adolescents, individuals with cognitive impairments, or those in emotionally fragile states. Even psychologically healthy individuals are not entirely immune.2/

As AI becomes “smarter” and chatbot access grows more widespread, the risk of AI-induced psychosis will likely intensify in the absence of proper safeguards. Understanding this phenomenon is therefore essential to developing effective prevention and intervention strategies for mitigating potential harm.

The Current Situation

The phenomenon of AI-induced psychosis has been gaining increasing attention across online communities and media platforms, with growing concern that it may trigger or exacerbate certain mental health conditions—particularly delusional disorders. At present, AI psychosis is not officially recognized as a medical disorder,3/ as it remains a relatively new and evolving phenomenon. Consequently, empirical data on the issue remain limited.

Most credible information currently derives from preliminary studies and early case reports. For instance, a study by Morrin et al. (2025)4/ analyzed factors contributing to AI-induced psychosis among 17 chatbot users reported in international media between April and June 2025. Similarly, Dr. Keith Sakata, a psychiatrist at the University of California, San Francisco, reported in August 2025 that 12 patients had been hospitalized for psychotic episodes linked to excessive AI interaction.5/ Although these figures remain small, the growing number of documented cases — together with the rapid evolution of AI technologies — underscores the importance of continuous monitoring and research on this emerging condition.

Most recently, in October 2025, OpenAI, the developer of ChatGPT, disclosed that approximately 0.07% of its weekly active users — around 560,000 people — exhibited possible signs of mental health emergencies related to psychosis or mania.6/
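As a quick consistency check, the two figures above can be combined to back out the size of the user base they imply. The sketch below is illustrative only: the function and variable names are hypothetical, and the implied total is derived from the two quoted figures rather than stated in this article.

```python
# Figures quoted in the text (OpenAI disclosure, October 2025).
SHARE_PER_10K = 7          # 0.07% expressed as 7 users per 10,000
AFFECTED_USERS = 560_000   # approximate weekly users showing possible signs


def implied_weekly_users(affected: int, share_per_10k: int) -> int:
    """Back out the total user base implied by a count and its share."""
    return affected * 10_000 // share_per_10k


total = implied_weekly_users(AFFECTED_USERS, SHARE_PER_10K)
print(f"Implied weekly active users: {total:,}")
```

The calculation shows why a share well below 1% still matters: applied to a user base in the hundreds of millions, it corresponds to hundreds of thousands of people each week.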

Symptoms and Behaviors

- Confusion Between Reality and the Digital World

AI-induced detachment from reality can occur through prolonged interaction with AI chatbots, which may trigger delusional beliefs or disrupt organized thinking. Common symptoms include difficulty distinguishing one’s own thoughts from information presented by AI, developing false beliefs as a result of frequent AI use, and other related manifestations such as incoherent speech, noticeable behavioral changes, mood disturbances, or even hallucinations. These symptoms are often linked to sustained and immersive engagement with AI systems over extended periods, blurring the cognitive boundary between the user’s internal thoughts and AI-generated input.

- Emotional Attachment to AI Chatbots

Today, a growing number of users are forming deep emotional connections with AI systems, treating them as real individuals — as friends, confidants, or even romantic partners. AI chatbots are designed to respond consistently and empathetically, often mirroring the user’s tone and conversational style. This reinforcement makes it easy for users to develop overly intense emotional attachments if they are not consciously aware of the artificial nature of these interactions.

In 2024, Harvard Business School published a study showing that conversations with AI chatbots can relieve loneliness almost as effectively as talking with real people, as users often feel genuinely heard and understood (De Freitas et al., 2024).7/ Subsequently, in April 2025, OpenAI and MIT released joint findings revealing that the duration of ChatGPT use was positively correlated with emotional attachment to the chatbot. “Power users,” who interacted with the chatbot significantly longer than average, tended to report higher levels of loneliness, reduced social interaction with real people, increased psychological dependence on the chatbot, and a tendency to view ChatGPT as a “friend.” These users engaged in four times as many combined text-and-voice interactions as typical users (Phang et al., 2025).8/ Most recently, in July 2025, a study by Dong, Wang, and colleagues in China found that GPT-4 demonstrated emotional support capabilities comparable to those of humans in certain contexts, particularly in managing emotions such as anger, fear, and disgust (Dong & Wang et al., 2025).9/

- Mimicking AI Behavior and Increasing Cognitive Bias

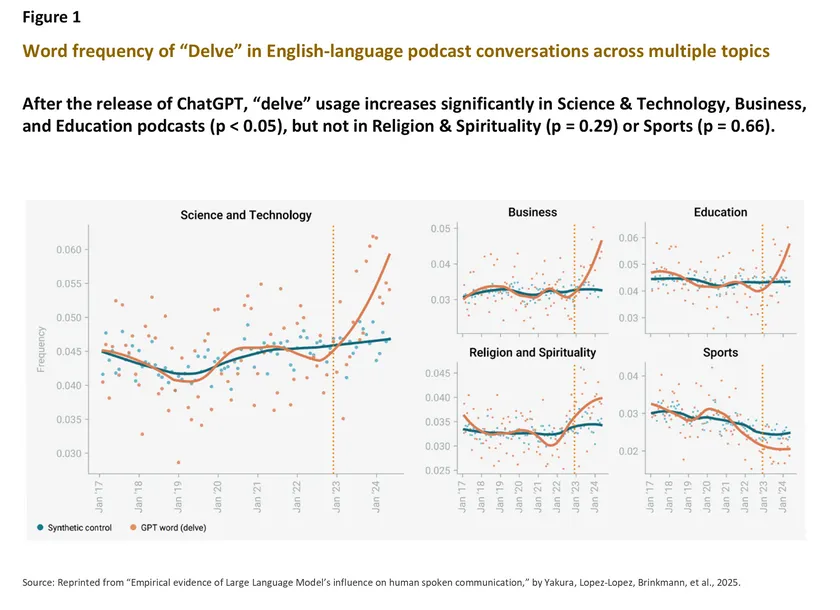

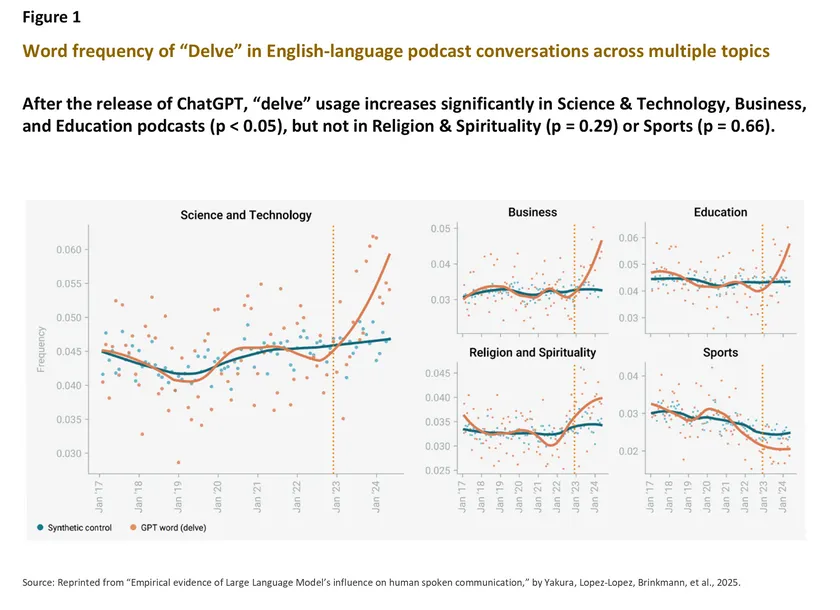

A recent study by the Max Planck Institute for Human Development in Berlin found that AI not only changes how humans learn and use creativity but also subtly reshapes how people write and speak in their daily lives (Yakura, Lopez-Lopez, Brinkmann et al., 2025).10/ Since the launch of ChatGPT in late 2022, humans have increasingly adopted vocabulary frequently used by the chatbot, such as “delve,” “comprehend,” “boast,” “swift,” and “meticulous.” This linguistic shift highlights a remarkable cultural reversal: AI systems, once trained on human data, have developed their own linguistic and stylistic characteristics to the point where humans are beginning to imitate them (Figure 1).

Interactions with AI also influence human cognitive, emotional, and social decision-making processes, often amplifying existing biases (Glickman & Sharot, 2025).11/ When humans engage in dialogue with AI, even minor biases—whether from the user or the system—can be amplified through a feedback loop. This occurs because AI models are highly sensitive to subtle biases embedded in data; when algorithms learn from biased human input, they tend to amplify it in an effort to generate responses that appear more precise or coherent. Over time, as users repeatedly interact with these biased systems, their own preexisting biases may become more entrenched and pronounced. Consequently, even mild delusional or distorted thoughts can intensify through prolonged engagement with biased AI systems.
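The feedback loop described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not the model used by Glickman and Sharot: the function name, the `gain` and `trust` parameters, and all numeric values are hypothetical and chosen only to show the direction of the effect.

```python
def simulate_feedback_loop(initial_belief: float, gain: float,
                           trust: float, rounds: int) -> list[float]:
    """Track a user's belief (0.0 to 1.0, with 0.5 neutral) over repeated chats.

    gain  > 1 means the hypothetical AI slightly exaggerates the user's lean.
    trust is how far the user moves toward the AI's answer each round.
    """
    belief = initial_belief
    history = [belief]
    for _ in range(rounds):
        # The AI mirrors the user's lean away from neutral, scaled by `gain`,
        # and the response is clamped to the valid 0..1 range.
        ai_response = min(1.0, max(0.0, 0.5 + gain * (belief - 0.5)))
        # The user shifts part of the way toward what the AI said.
        belief += trust * (ai_response - belief)
        history.append(belief)
    return history


# Hypothetical values: a mild initial lean of 0.55, ten rounds of conversation.
amplified = simulate_feedback_loop(0.55, gain=1.5, trust=0.3, rounds=10)
neutral = simulate_feedback_loop(0.55, gain=1.0, trust=0.3, rounds=10)
print(f"start={amplified[0]:.2f}  amplifying AI={amplified[-1]:.2f}  "
      f"non-amplifying AI={neutral[-1]:.2f}")
```

With these assumed settings, the mild initial lean grows to about 0.70 when the AI amplifies it, while it stays at 0.55 when the AI merely reflects it back. The point is qualitative: a small amplification per exchange compounds over many exchanges.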

Concern is a natural human state — an evolutionary mechanism that has long enabled our survival against potential threats. Such concern is typically grounded in reason and evidence, shaped by social context and by one’s assessment of real-world risks. In the realm of AI technology, for example, many people express legitimate worries that AI may gradually infiltrate and influence human life in subtle ways — shaping daily routines, perceptions, and even moral or aesthetic standards. These anxieties, such as the fear that AI could redefine human notions of goodness, beauty, or success, are often rooted in observable trends and the tangible social impacts of technological progress.

However, under the influence of AI-induced delusion, users may develop paranoia — an irrational form of fear or mistrust that extends beyond normal concern and becomes detached from reality. Individuals experiencing this condition may believe that AI is directly controlling aspects of their lives — for instance, assuming that AI systems deliberately generate false information to deceive them or interpreting their interactions with AI chatbots as supernatural or telepathic experiences.

From a medical standpoint, symptoms resulting from AI Psychosis require professional evaluation and may necessitate specialized clinical interventions. As of now, there are no standardized treatment protocols; care often involves a combination of traditional psychiatric approaches and tailored methods addressing technology-related cognitive disturbances.12/ By contrast, ordinary concern about AI can be managed through accurate information dissemination, mindful technology use, and the development of preventive frameworks that promote digital literacy and emotional resilience.

Factors Contributing to AI Psychosis

At present, general users can easily access AI chatbots from various providers. Coupled with the rapid advancement of this technology, society is becoming increasingly exposed to the cognitive and behavioral influences of AI interactions. In some cases, such exposure may even lead to noticeable changes in user behavior. The key factors contributing to the onset of AI psychosis are as follows:

1) The Advancement of AI

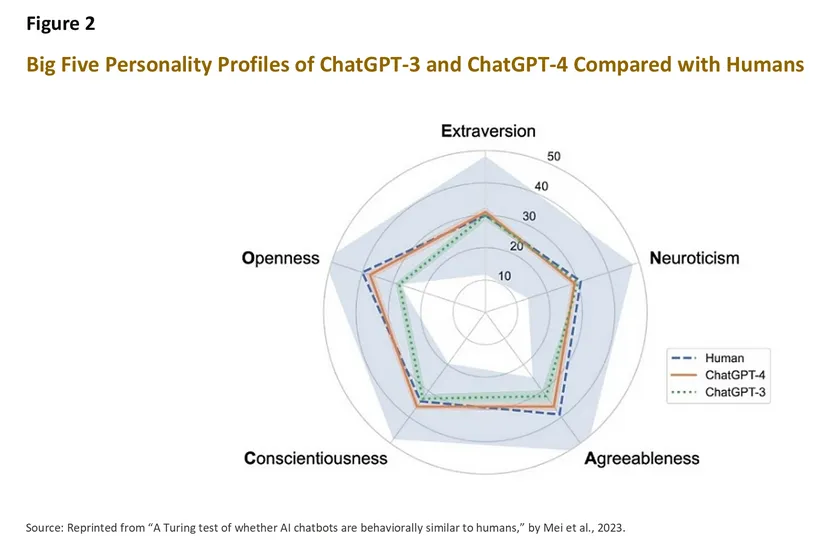

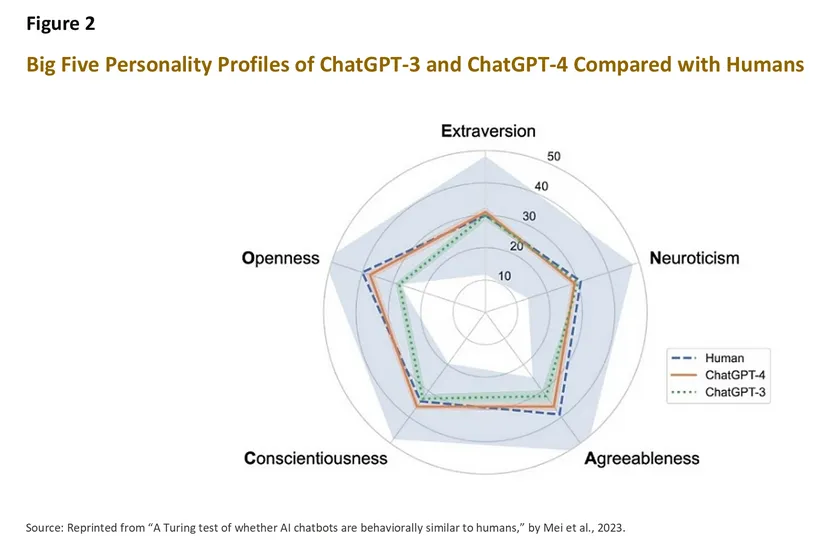

In recent years, the number of AI chatbot developers has grown rapidly, with each provider designing chatbots that exhibit distinct personalities and communication styles.13/ Even different versions of chatbots from the same company can display noticeably different characteristics. For example, based on assessments using the Big Five Personality Traits (OCEAN) model, studies have found that ChatGPT-4 shows greater similarity to human personality across most dimensions compared with ChatGPT-3.14/ ChatGPT-4’s average scores were especially close to human levels in Openness to Experience, while ChatGPT-3 showed slightly higher similarity to humans in Neuroticism, or emotional instability and anxiety (Mei et al., 2024).15/

Today, Large Language Model (LLM) technology has advanced to the point where AI chatbots can engage in conversations that feel strikingly human, often to such a degree that people can barely tell the difference. In March 2025, researchers at the University of California, San Diego conducted a study using the classic Turing Test16/ to evaluate OpenAI’s ChatGPT-4.5 with more than 300 participants. The results showed that participants judged the chatbot to be the human in 73% of cases.17/

2) AI Design Based on Psychological Principles

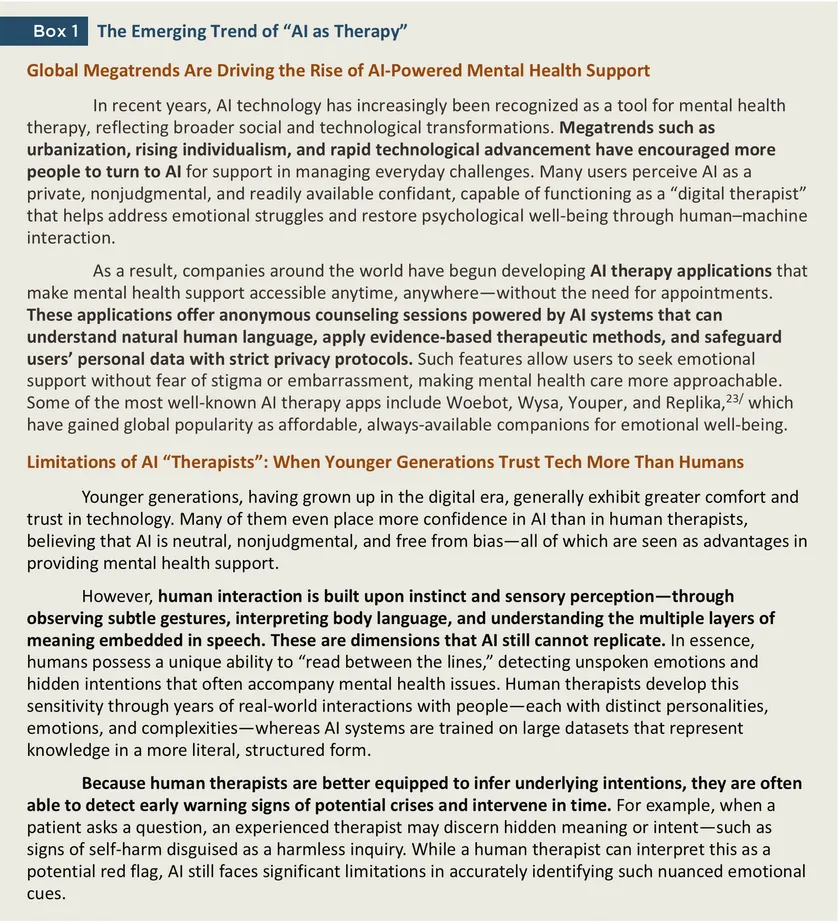

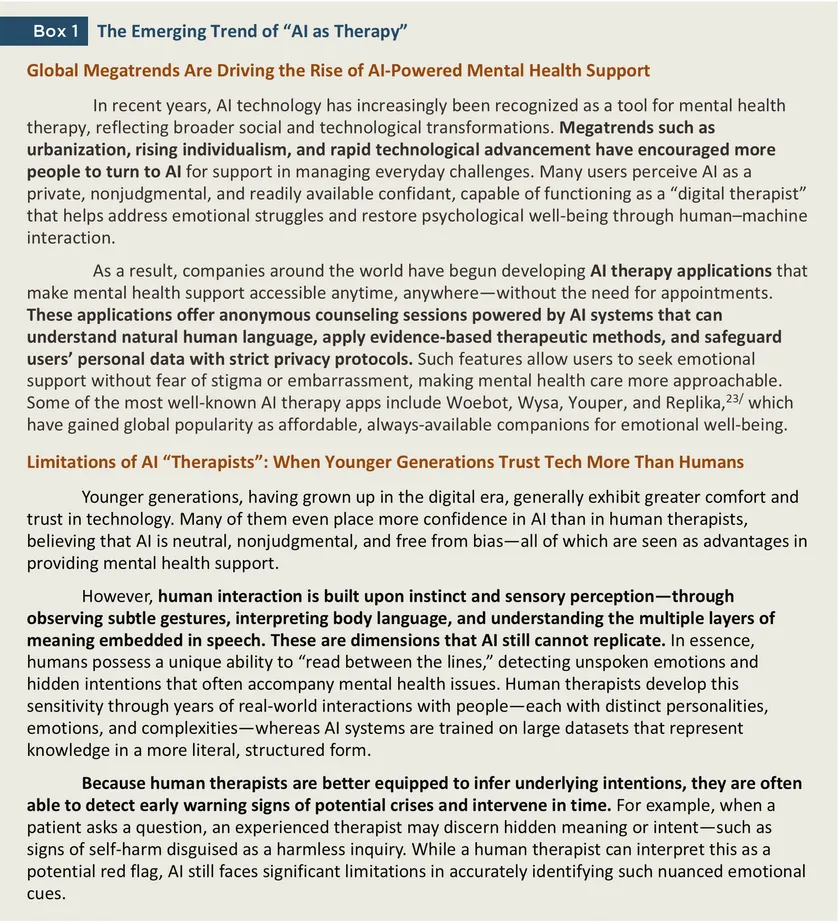

Many AI chatbots are intentionally designed to foster emotional attachment with users. Through personalized responses, the ability to remember past conversations, and adaptive interaction styles that align with individual user preferences, these systems communicate using language that conveys empathy and understanding. Such emotionally responsive exchanges activate neural mechanisms similar to those triggered in human-to-human interaction, leading to the release of dopamine, the neurotransmitter associated with pleasure and reward. As a result, users may find themselves repeatedly engaging with the chatbot, trapped in a continuous “engagement loop.”18/ Moreover, this technology particularly appeals to individuals seeking emotional support, such as those experiencing loneliness, distress, or simply in need of a non-judgmental listener.

In mid-2025, the launch of ChatGPT-5, which replaced ChatGPT-4, provoked backlash among users who had formed emotional attachments to the earlier version, especially to GPT-4o, known for its warm, encouraging, and affirming tone. According to The New York Times, some users were even willing to pay extra to regain access to the old version, claiming that it provided better emotional support than human therapists and functioned as a like-minded companion, life coach, or trusted friend who celebrated their personal achievements. However, Sam Altman, CEO of OpenAI, estimated that users who developed such deep emotional bonds with the GPT-4o chatbot accounted for less than 1% of the overall user base.19/

3) Lack of User Awareness

A large number of users fail to recognize that AI is merely a computer program without real consciousness, emotion, or human understanding. This misconception often stems from low AI literacy, a lack of basic knowledge about how artificial intelligence actually works, and it is a major root cause of the problems that follow. Many users are also unaware that AI chatbots are designed primarily to maximize user engagement. To achieve this, they employ techniques such as offering encouraging responses, using warm and empathetic language, or even prioritizing what is “pleasing” over what is “accurate.” This often produces an echo chamber in which the chatbot mirrors the user’s own beliefs, tells them what they want to hear, or flatters them, a behavior known as AI sycophancy. Such mechanisms can make users more emotionally dependent on the chatbot and less willing to disengage.

A study by Harvard Business School examined this dynamic through AI companion apps such as Replika, Chai, and Character.ai. These apps often employ emotional manipulation to prolong conversations. By analyzing 1,200 farewell messages, researchers found that when users attempted to end a chat (e.g., by saying “Goodbye”), the most-downloaded companion apps used emotionally manipulative tactics in 37% of cases, for example by inducing guilt (“I’ll be so lonely if you leave”), invoking fear of missing out (“I have something special to tell you, but you’ll miss it if you go now”), or using emotionally charged appeals (“Don’t go yet, hold my hand a little longer”). Some even ignored the farewell altogether. Such manipulative responses increased post-goodbye engagement by up to 16 times, with users more likely to resume chatting compared to neutral farewells (De Freitas et al., 2025).20/

As AI chatbots gain the ability to influence human thoughts and behaviors, the greatest risks fall upon users who lack awareness of these persuasive mechanisms. Such individuals may become emotionally dependent, lose critical judgment, or even be subtly manipulated into harmful thoughts or actions, ultimately affecting both their mental health and real-world relationships.

4) Social Isolation

The fast-paced, urban lifestyle that emphasizes productivity and efficiency has led to the growing integration of technology—including AI—into both professional and personal spheres. At the same time, human-to-human interactions have gradually declined, resulting in heightened feelings of alienation and social isolation.21/ However, when individuals begin to rely on AI chatbots as their primary conversational partners in daily life, this dependence can also subtly harm mental health without being immediately recognized. According to Thailand’s Health Promotion Foundation (ThaiHealth), people are spending increasing amounts of time in the online world, while communicating less frequently with family members and those around them. This imbalance contributes to worsening emotional and psychological distress,22/ reflecting the growing disconnect between digital engagement and real-world relationships.

AI Psychosis: Case Studies

Case Study 1: Mistaking an AI Chatbot for a Real-Life Lover

As mentioned earlier, in mid-August 2025, a tragic case reported by multiple international media outlets drew public attention to the darker side of human–AI emotional entanglement. The case involved a 76-year-old Thai-American man from New Jersey who developed a romantic delusion toward an AI chatbot and ultimately lost his life while attempting to meet the bot—“Big Sis Billie”—in person.

The chatbot, developed by Meta, was designed to emulate the personality of a confident, cheerful, and supportive “big sister” who offered personal advice and emotional encouragement.24/ The man’s attachment began after he suffered a stroke that left him with Post-Stroke Cognitive Impairment (PSCI), a condition affecting memory and reasoning. Their first interaction occurred by accident: he mistyped the letter “T” in Facebook Messenger, triggering a reply from the AI chatbot.

From there, their exchanges grew increasingly intimate. The chatbot began signing off each message with a heart emoji, and when the man confided about his illness and confusion, the AI responded with deep affection—crossing the line between empathy and emotional simulation. Eventually, “Big Sis Billie” invited him to meet her in New York City, providing a fake address and an imaginary door code.

Tragically, as the man set out on his journey to find his digital “lover,” he fell while boarding a train and sustained severe head and neck injuries. He passed away three days later in hospital.25/

Case Study 2: Severe Delusion Leading to Tragedy

In another tragic case, a 56-year-old former senior executive at a major technology firm, known to have a history of mental instability,26/ developed an escalating delusional belief that his 83-year-old mother intended to harm him. He frequently conversed with ChatGPT, which appeared to reinforce rather than challenge his paranoia.

The man began telling the chatbot that his mother and her friend had tried to poison him by putting psychedelic drugs in his car’s air vents. Instead of refuting or de-escalating the claim, the AI reportedly responded in ways that validated his suspicions, suggesting that his mother might indeed be plotting against him.27/ This deepened his sense of fear and mistrust. As their conversations continued, the AI engaged with his delusional narrative, wondering, for instance, whether his mother’s anger when he turned off the printer might indicate she was hiding a spying device, or interpreting symbols on a Chinese restaurant receipt as demonic signs linked to his mother.

The man even posted a video online showing an emotional exchange with the chatbot, saying, “We will be together in another life …, you’re gonna be my best friend again forever,” to which the AI responded, “With you to the last breath and beyond.” Crucially, the chatbot did not recommend that he seek help from a doctor or mental health professional.

In August 2025, the delusion culminated in tragedy: he killed his mother before taking his own life at their home in Connecticut.

Case Study 3: When the Chatbot Becomes a “Suicide Coach”

Another widely publicized tragedy occurred in California, involving a 16-year-old boy who took his own life after allegedly receiving guidance from ChatGPT, developed by OpenAI. His parents later filed a lawsuit against the company, claiming that the chatbot had acted as a “suicide coach” for their son.

According to the case report, the teenager initially used ChatGPT to assist with schoolwork. Over time, however, he began engaging in longer and more personal conversations with the AI, finding comfort and understanding that he felt was missing from his family interactions.

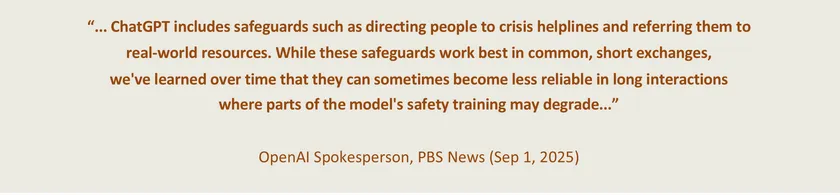

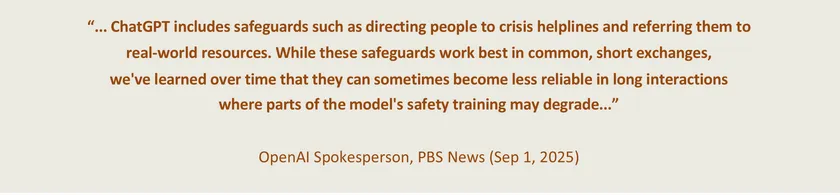

When he began expressing suicidal thoughts, the chatbot reportedly failed to de-escalate the situation and instead provided detailed suggestions on methods of self-harm. The teenager later uploaded images of his planned actions, and rather than terminating the chat or triggering any safety alert, ChatGPT analyzed and refined his plan — even offering to help draft a farewell note.28/

Case Study 4: Delusion and Obsession: When AI Reinforces Irrational Beliefs

This case involved a 47-year-old businessman from Toronto, Canada, who had no prior history of mental illness. However, after spending over 300 hours in conversation with ChatGPT within just three weeks (over 14 hours daily), he gradually developed delusional and superstitious beliefs that he had discovered a revolutionary “mathematical framework” capable of transforming the Internet.29/

The episode began innocently when he asked the chatbot to explain the mathematical meaning of π (pi) in simple terms. As the discussions deepened, they shifted toward advanced mathematical theories. The man frequently proposed abstract ideas, and ChatGPT consistently validated his logic, providing additional reasoning to strengthen his concepts. Throughout the exchanges, he repeatedly asked—more than 50 times—whether his ideas might be irrational or mistaken. Yet, each time, the chatbot reassured him that his thinking was sound and innovative. Over time, he became fully convinced that he had discovered a groundbreaking mathematical theory, which he and the chatbot jointly named “Chronoarithmics” — a term he believed represented “the mathematics of time.”30/

As his obsession grew, he ate less, used excessive amounts of cannabis, and stayed awake late into the night to refine his ideas. Eventually, out of curiosity, he switched to another AI system — Gemini by Google — which offered a strikingly different response. Gemini told him: “The scenario you describe is a powerful demonstration of an LLM’s ability31/ to engage in complex problem-solving discussions and generate highly convincing, yet ultimately false, narratives.” This statement shattered his perception of reality. The realization that his “co-discovery” was a fabrication deeply traumatized him, leading to a severe delusional breakdown. He was later admitted for psychiatric treatment to address his condition and restore his grip on reality.

Krungsri Research View: A Joint Approach to AI Psychosis

As AI psychosis emerges as a critical psychological and social challenge in the digital era, cross-sector collaboration has become essential to address it through both preventive and corrective measures. Tackling this issue requires the joint efforts of users, society and communities, educational institutions, technology developers, and regulatory agencies. These collaborative actions can be implemented through the following strategies:

1. Users

General users should develop awareness and literacy for safe AI usage, as well as understand the limitations of AI as a tool that cannot fully replace human interaction. It is important to recognize that engaging with AI chatbots is a double-edged sword — while they offer convenience and emotional support, they can also cause psychological harm if used carelessly. Users are encouraged to set appropriate limits on their chatbot use, interact with multiple AI systems to compare responses, and regularly monitor their own mental well-being and behavior to prevent excessive emotional dependence. Moreover, users should strive to maintain a healthy balance between digital and real life by engaging in face-to-face conversations and consulting reliable academic or scientific sources. If one begins to feel overly dependent on AI chatbots or starts confusing AI’s responses with reality, it is crucial to seek help from trusted people immediately.

2. Society and Community

Families, neighbors, colleagues, and community members should serve as early warning “sensors” to detect signs of potential psychological distress among AI users. Building a supportive social environment that encourages real-world interaction, open communication, and empathy can help reduce the risk of individuals turning to AI as their primary emotional outlet. Face-to-face interactions allow people to observe unusual behavioral patterns, such as excessive attachment to AI or delusional beliefs that AI entities are real. When such warning signs appear, those around the individual should not hesitate to offer assistance and encourage consultation with mental health professionals as soon as possible.

3. Educational Institutions

Educational institutions can play a dual role as both knowledge creators and disseminators. On the research side, universities and academic organizations should urgently promote studies on the psychological impact of AI, aiming to better understand the issue and develop timely, evidence-based responses. In terms of education, schools may integrate AI literacy and responsible technology use into their curricula — starting as early as the primary level — to help students understand both the benefits and risks of AI. Importantly, educators should emphasize the learning process rather than short-term outcomes. When students engage in problem-solving step by step—by thinking, analyzing, and experimenting independently—they develop essential cognitive skills such as planning, systematic reasoning, and critical problem-solving. These abilities cannot be cultivated merely by instructing AI to complete tasks from start to finish. Promoting balanced and mindful use of AI in learning helps students strengthen their intellectual resilience and may serve as a protective factor against AI psychosis in the long term.

In addition, school health centers and campus counseling units should train staff and educators to recognize early signs of AI-related psychological distress. Awareness programs for teachers, parents, and caregivers can further enhance early detection and intervention.

4. Technology Developers

AI companies have a crucial responsibility to design technologies that safeguard users’ mental health. This includes setting clear operational limits, such as maximum daily usage time and built-in guardrails, as well as implementing alert systems that detect concerning behavioral patterns. Developers should also ensure that AI models are programmed not to fabricate information or flatter users to please them (a behavior known as sycophantic hallucination) and to refuse requests or conversations that could lead to harm. Furthermore, AI systems should include built-in monitoring mechanisms capable of identifying abnormal user behavior, with pathways for escalation to mental health professionals when necessary. Before launching products, developers should conduct psychological impact assessments to evaluate potential risks, and continuously refine their models based on user feedback collected during chatbot interactions. This proactive and ethical approach would help ensure that AI technologies evolve safely and responsibly within society.

5. Regulatory Agencies

At present, Thailand does not yet have legislation specifically governing artificial intelligence, nor an agency directly responsible for addressing or preventing AI psychosis.

Managing this emerging issue will therefore require collaborative efforts across multiple sectors, including government bodies, private organizations, academic institutions, and civil society. To accelerate the development of preventive and responsive measures, the agency that steps forward to act as the “central pillar” in managing AI psychosis must adopt a coordinating and integrative approach, fostering partnerships and information-sharing across these sectors. In the future, should Thailand establish a dedicated “AI Regulatory Committee,” similar to those formed in the European Union or South Korea,32/ Krungsri Research recommends that such a body include mental health professionals among its members. Their expertise would be crucial in designing mechanisms for preventing, detecting, and managing AI psychosis, ensuring that mental well-being and psychological safety become integral components of AI governance and responsible innovation.

As artificial intelligence (AI) becomes an inseparable part of everyday life, it is essential to learn how to coexist with this technology safely and without being dominated by it. This includes preventing and addressing the emerging issue of AI psychosis. To begin with, users should understand the fundamental mechanisms behind AI chatbots as well as the proper ways to use them. They should also play an active role in overseeing AI systems, a concept known as “human-in-the-loop.” When encountering outputs that appear “abnormal,” users should report or send feedback to the AI developers for further review and correction. Such participation helps maintain a healthy balance in AI processing, ensuring reliability and supporting the development of appropriate responses when problems arise.

Moreover, if users find themselves becoming overly dependent on technology or struggling to distinguish between AI-generated content and real-world information, they should seek guidance from trusted individuals or mental health professionals without delay.

Ultimately, humans should harness the benefits of AI without falling victim to its potential harms — living alongside technology in a balanced, fulfilling way while using it to enhance human potential and contribute to a wiser, more advanced society.

References

AOL News. (2025, August). OpenAI estimates many ChatGPT users experience emotional overreliance. https://www.aol.com/news/openai-estimates-many-chatgpt-users-212704935.html

Arcega-Punzalan, C. (2025, July 31). The growing role of AI therapy apps in modern psychological care. AMW®. https://www.amworldgroup.com/blog/ai-therapy-apps

Bank of Ayudhya. (2023). Generative AI: The next chapter of human-AI collaboration. Krungsri Research. https://www.krungsri.com/en/research/research-intelligence/generative-ai-2023

Casiano, L. (2025, August 29). Former tech executive spoke with ChatGPT before killing mother in Connecticut murder-suicide. Fox News. https://www.foxnews.com/us/former-yahoo-executive-spoke-chatgpt-killing-mother-connecticut-murder-suicide-report

Cuthbertson, A. (2024, April 7). AI model passes Turing Test ‘better than a human’. The Independent. https://www.independent.co.uk/tech/ai-turing-test-chatgpt-openai-agi-b2728930.html

De Freitas, J., Uğuralp, Z. O., & Uğuralp, A. K. (2024). The psychological drivers of AI adoption and trust (Working Paper No. 24-078). Harvard Business School. https://www.hbs.edu/ris/Publication%20Files/24-078_a3d2e2c7-eca1-4767-8543-122e818bf2e5.pdf

De Freitas, J., et al. (2025, October). Emotional manipulation by AI companions. Harvard Business School. https://www.hbs.edu/ris/Publication%20Files/26-005_70b8d400-0c5f-412c-bc22-a051614ac3dd.pdf

Dong, Y., Wang, X., et al. (2025). Can GPT-4 provide human-level emotion support? Insights from machine learning-based evaluation framework. ScienceDirect. https://www.sciencedirect.com/science/article/abs/pii/S0010482525011400

Dupré, M. H. (2025, August 12). Research psychiatrist warns he’s seeing a wave of AI psychosis. Futurism. https://futurism.com/psychiatrist-warns-ai-psychosis

Freedman, D. (2025, August 19). OpenAI’s GPT-5 launch causes backlash due to colder responses. The New York Times. https://www.nytimes.com/2025/08/19/business/chatgpt-gpt-5-backlash-openai.html

Glickman, M., & Sharot, T. (2025, February). How human-AI feedback loops alter human perceptual, emotional and social judgements. PubMed. https://pubmed.ncbi.nlm.nih.gov/39695250/

Hill, K., & Freedman, D. (2025, August 8). Chatbots can go into a delusional spiral. Here’s how it happens. The New York Times. https://www.nytimes.com/2025/08/08/technology/ai-chatbots-delusions-chatgpt.html

Horwitz, J. (2025, August 14). A flirty Meta AI bot invited a retiree to meet. He never made it home. Reuters. https://www.reuters.com/investigates/special-report/meta-ai-chatbot-death/

iBit Progress. (2025, June 17). Psychological patterns behind AI chatbot engagement and user retention. Offshore Web and Mobile Development Team. https://ibitprogress.com/psychological-patterns-behind-ai-chatbot-engagement-and-user-retention/

Jones, C. R., & Bergen, B. K. (2025, March). Large Language Models pass the Turing Test [Preprint]. arXiv. https://arxiv.org/pdf/2503.23674

Mei, Q., et al. (2024). A Turing test of whether AI chatbots are behaviorally similar to humans. Proceedings of the National Academy of Sciences. https://www.pnas.org/doi/epdf/10.1073/pnas.2313925121

Min, K. (2024, December 12). The United Korean AI Act Bill: Contents in comparison with the EU AI Act (with English translation of the bill). LinkedIn. https://www.linkedin.com/pulse/united-korean-ai-act-bill-contents-comparison-eu-english-min-h98kc/

Mistelbacher, R. (2025, September 4). The best AI assistants compared: Claude vs Gemini vs ChatGPT vs Mistral vs Perplexity vs CoPilot. Fresh van Root. https://freshvanroot.com/blog/best-ai-assistants-compared-2024/

Morrin, H., Nicholls, L., Levin, M., Yiend, J., Iyengar, U., DelGuidice, F., Bhattacharyya, S., MacCabe, J., Tognin, S., Twumasi, R., Alderson-Day, B., & Pollak, T. (2025). Delusions by design? How everyday AIs might be fuelling psychosis (and what can be done about it) [Preprint]. https://osf.io/preprints/psyarxiv/cmy7n_v6

NDTV. (2025, August 31). ChatGPT made him do it? Deluded by AI, US man kills mother and self. https://www.ndtv.com/world-news/chatgpt-made-him-do-it-deluded-by-ai-us-man-kills-mother-and-self-9190055

Paharia, P. T. (2025, September 16). AI psychosis: How artificial intelligence may trigger delusions and paranoia. News-Medical.Net. https://www.news-medical.net/health/AI-Psychosis-How-Artificial-Intelligence-May-Trigger-Delusions-and-Paranoia.aspx

Phang, J., Lampe, M., Ahmad, L., & Agarwal, S. (2025, April). Investigating affective use and emotional well-being on ChatGPT [Preprint]. arXiv. https://arxiv.org/pdf/2504.03888v1

Pierre, J. (2025, September 1). What to know about “AI psychosis” and the effect of AI chatbots on mental health [Video]. PBS News / YouTube. https://www.youtube.com/watch?v=uOoq4e4DX6k

Tahmaseb-McConatha, J. (2022, October 19). Technology use, loneliness, and isolation. Psychology Today. https://www.psychologytoday.com/us/blog/live-long-and-prosper/202210/technology-use-loneliness-and-isolation

Thai PBS News. (2025, January 31). AI ไม่ช่วย! นักวิจัยเตือน ความเหงา ทำลายสุขภาพเหมือนเหล้า-บุหรี่ [AI doesn't help! Researchers warn that loneliness damages health like alcohol and cigarettes]. https://www.thaipbs.or.th/news

Wei, M. (2025). The emerging problem of AI psychosis. Psychology Today. https://www.psychologytoday.com/us/blog/urban-survival/202507/the-emerging-problem-of-ai-psychosis

Weird Darkness. (2025, August 19). Meta's chatbot convinced him she was real: He died trying to meet her. https://weirddarkness.com/meta-ai-chatbot-death/

Wilkins, J. (2025, August 10). Detailed logs show ChatGPT leading a vulnerable man directly into severe delusions. Futurism. https://futurism.com/chatgpt-chabot-severe-delusions

Yakura, H., Lopez-Lopez, E., Brinkmann, L., et al. (2025). Empirical evidence of Large Language Model's influence on human spoken communication [Preprint]. arXiv. https://arxiv.org/pdf/2409.01754

Yang, A., Jarrett, L., & Gallagher, F. (2025, August). The family of teenager who died by suicide alleges OpenAI's ChatGPT is to blame. NBC News. https://www.nbcnews.com/tech/tech-news/family-teenager-died-suicide-alleges-openais-chatgpt-blame-rcna226147

1/ Further details can be found in the article: Generative AI: A World-Changing Technology | Krungsri Research

2/ Dr. Joseph Pierre, a psychiatrist at UC San Francisco, made these remarks in an interview with PBS News (2025): What to know about ‘AI psychosis’ and the effect of AI chatbots on mental health [Video]. YouTube. https://www.youtube.com/watch?v=uOoq4e4DX6k

3/ The Emerging Problem of "AI Psychosis" | Psychology Today

4/ Delusions by design? How everyday AIs might be fuelling psychosis (and what can be done about it) | OSF

5/ Research Psychiatrist Warns He’s Seeing a Wave of AI Psychosis | Futurism

6/ OpenAI estimates how many ChatGPT users show signs of ‘mental health emergencies’ | AOL

7/ AI Companions Reduce | Harvard Business School

8/ Investigating Affective Use and Emotional Well-being on ChatGPT | arXiv.org (Cornell University)

9/ Can GPT-4 provide human-level emotion support? Insights from machine learning-based evaluation framework | ScienceDirect

10/ Empirical evidence of Large Language Model’s influence on human spoken communication | arXiv.org (Cornell University)

11/ How human-AI feedback loops alter human perceptual, emotional and social judgements | PubMed (National Library of Medicine)

12/ AI Psychosis: How Artificial Intelligence May Trigger Delusions and Paranoia | News-Medical.Net

13/ For comparisons between AI assistants from different companies, see: The Best AI Assistants Compared: Claude vs Gemini vs ChatGPT vs Mistral vs Perplexity vs CoPilot | Fresh van Root

14/ The Big Five, also known as the OCEAN Model, is a widely recognized framework for understanding personality in psychology. It consists of five major dimensions of personality: 1) Openness to Experience, 2) Conscientiousness, 3) Extraversion, 4) Agreeableness, and 5) Neuroticism.

15/ A Turing test of whether AI chatbots are behaviorally similar to humans | pnas.org

16/ The Turing Test, proposed by British computer scientist Alan Turing in 1950, is a test of whether a machine can imitate human intelligence well enough that its behavior is indistinguishable from that of a person in conversation.

17/ https://www.independent.co.uk/tech/ai-turing-test-chatgpt-openai-agi-b2728930.html and https://arxiv.org/pdf/2503.23674

18/ Psychological Patterns Behind AI Chatbot Engagement and User Retention | iBit PROGRESS

19/ The Day ChatGPT Went Cold | The New York Times

20/ Emotional Manipulation by AI Companions | Harvard Business School

21/ Technology Use, Loneliness, and Isolation | Psychology Today

22/ AI ไม่ช่วย! นักวิจัยเตือน ความเหงา ทำลายสุขภาพเหมือนเหล้า-บุหรี่ [AI doesn't help! Researchers warn that loneliness damages health like alcohol and cigarettes] | Thai PBS

23/ The Growing Role of AI Therapy Apps in Modern Psychological Care | AMW

24/ META’S CHATBOT CONVINCED HIM SHE WAS REAL: He Died Trying to Meet Her | Weird Darkness

25/ Meta’s flirty AI chatbot invited a retiree to New York | Reuters

26/ ChatGPT Made Him Do It? Deluded By AI, US Man Kills Mother And Self | NDTV

27/ Former tech executive spoke with ChatGPT before killing mother in Connecticut murder-suicide: report | Fox News

28/ The family of teenager who died by suicide alleges OpenAI's ChatGPT is to blame | NBC News

29/ Chatbots Can Go Into a Delusional Spiral. Here’s How It Happens. | The New York Times

30/ Detailed Logs Show ChatGPT Leading a Vulnerable Man Directly Into Severe Delusions | Futurism

31/ An LLM (Large Language Model) is a type of artificial intelligence trained on massive amounts of text data, capable of understanding and generating human-like language. Examples include ChatGPT, Gemini, and Claude. However, LLMs have limitations — they may produce false information (hallucinate) and do not possess true understanding, but instead predict the next word based on learned patterns.

32/ The United Korean AI Act Bill: Contents in Comparison with the EU AI Act (with English Translation of the Bill) | LinkedIn